Assessing the state of Kube with Buildbot

Over the past weeks I’ve been busy improving our continuous integration (CI) system for Kube. In particular, I’ve set up a Buildbot instance to build, test, benchmark and deliver Kube.

Buildbot

Buildbot is a nifty CI “framework”. It’s not so much a CI “system”, as it really isn’t much more than a framework to build your own system. It’s more a set of Python libraries that make it fairly straightforward to build your own CI system, once you get to grips with what exactly Buildbot provides.

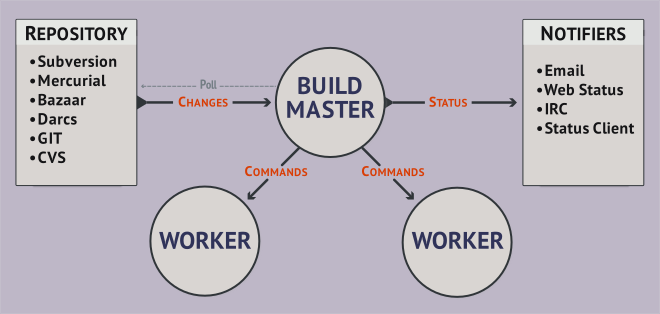

A Buildbot setup typically consists of:

- A master: The job scheduler that triggers and distributes the various tasks.

- A set of workers: Each worker is a process that can run on the same host as the master or somewhere else. Workers do nothing more than execute the commands they receive and return the results.

- A set of builders: For whatever you want to do within the CI you create a builder. A builder can have multiple steps that describe how to build the end result.

- A set of schedulers: Schedulers are part of the master and trigger builders according to a policy (manual trigger, nightly build, waiting on some external trigger, …)
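To make these pieces a bit more concrete, here is a minimal sketch of how they could show up in a Buildbot `master.cfg`. This is not our actual configuration: the worker name, password, repository URL, builder name and build commands are all placeholders I made up for illustration, and a real setup will need more than this fragment.

```python
# master.cfg fragment (sketch): requires the buildbot package.
from buildbot.plugins import schedulers, steps, util, worker

c = BuildmasterConfig = {}

# Workers: processes that connect to the master and execute commands.
# Name and password are placeholders.
c['workers'] = [worker.Worker("example-worker", "example-password")]
c['protocols'] = {'pb': {'port': 9989}}

# Steps: what a builder does, in order. The repository URL and the
# commands are hypothetical stand-ins, not Kube's real build recipe.
factory = util.BuildFactory()
factory.addStep(steps.Git(repourl="https://example.org/kube.git",
                          mode="incremental"))
factory.addStep(steps.ShellCommand(name="build",
                                   command=["make"],
                                   haltOnFailure=True))
factory.addStep(steps.ShellCommand(name="test",
                                   command=["make", "test"]))

# Builder: ties the steps to the workers that are allowed to run them.
c['builders'] = [
    util.BuilderConfig(name="example-nightly",
                       workernames=["example-worker"],
                       factory=factory),
]

# Schedulers: policies that decide when a builder is triggered.
c['schedulers'] = [
    schedulers.Nightly(name="nightly",
                       branch="master",
                       builderNames=["example-nightly"],
                       hour=2, minute=0),
    schedulers.ForceScheduler(name="force",
                              builderNames=["example-nightly"]),
]
```

Since this is plain Python, things like generating one builder per platform in a loop come for free, which is a large part of why the configuration feels like software development.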

A build process works like this:

- A scheduler triggers a builder or a set of builders (e.g. a nightly build at 2 am).

- The master assigns a worker to the builder, chosen from the workers that are configured for that builder (e.g. a Windows builder needs a Windows worker running on a Windows host).

- The builder then runs its configured steps via that worker: it sends command by command to the worker, waits for each one to complete and receives the results and logs.
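The flow above can be mimicked in a few lines of plain Python. To be clear, this is a toy model and not how Buildbot is implemented: all names are hypothetical, the "worker" is just a local subprocess, and stopping at the first failing step is a simplification (in Buildbot that behaviour is configurable per step).

```python
import subprocess
import sys

# Toy model only: hypothetical names, not Buildbot classes.
WORKERS = {"linux-worker": "linux", "windows-worker": "windows"}

BUILDERS = {
    "example-linux": {
        "platform": "linux",
        # Each step is a command; here they only print their own name.
        "steps": [
            [sys.executable, "-c", "print('configure')"],
            [sys.executable, "-c", "print('build')"],
            [sys.executable, "-c", "print('test')"],
        ],
    },
}


def pick_worker(builder):
    """Return the first worker whose platform matches the builder."""
    for name, platform in WORKERS.items():
        if platform == builder["platform"]:
            return name
    raise LookupError("no matching worker")


def run_build(builder_name):
    """Run the builder's steps one by one and collect their output."""
    builder = BUILDERS[builder_name]
    worker = pick_worker(builder)
    logs = []
    for command in builder["steps"]:
        # A real master would send the command to the worker process;
        # this sketch simply runs it locally.
        result = subprocess.run(command, capture_output=True, text=True)
        logs.append(result.stdout.strip())
        if result.returncode != 0:
            return worker, "failure", logs
    return worker, "success", logs


def trigger(builder_names):
    """What a scheduler does once its policy fires."""
    return {name: run_build(name) for name in builder_names}


print(trigger(["example-linux"]))
```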

Buildbot comes with a little webserver and a dashboard (based on Python Flask) that can be freely extended and provides the main interaction point with Buildbot.

Alternative ways to interact exist as well, such as an IRC bot and email notifications, and you can of course roll your own interface doing whatever you want.

I quite like the overall experience. It’s certainly much harder initially to figure out how everything works, compared to something like Jenkins. But once you know the basics it’s also fairly straightforward, and the configuration is more like software development than configuring a system (which suits me as a software developer).

The system also becomes very reproducible: I can simply clone the git repository, set up the Python virtual env on my laptop and have my CI to go.

Buildbot for Kube

For Kube we’re interested in the following things:

- Ensuring the build passes.

- Ensuring the tests pass.

- Regular benchmark runs and tracking of the results.

- Building and delivery of nightly builds.

- Builds on other platforms.

By now we have the first three parts covered. Kube is built, tested and the benchmarks are run every night.

We track the benchmark results with HAWD (How Are We Doing; a small framework to collect benchmark results, currently living in the sink repository), and render graphs from those results on a Buildbot-dashboard using Chart.js. This already greatly helps manual assessment of performance characteristics, and it would be great to complement that with some automatic boundary-checking.
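As a rough idea of what such automatic boundary-checking could look like, here is a small sketch that compares the latest result against the median of previous runs. It does not use HAWD's actual storage format or API; it assumes the timings are already available as a plain list of numbers, and the 20% tolerance is an arbitrary value picked for illustration.

```python
from statistics import median


def exceeds_boundary(history, latest, tolerance=0.2):
    """Return True if `latest` is more than `tolerance` (as a fraction)
    above the median of the previous results in `history`."""
    if not history:
        return False  # nothing to compare against yet
    baseline = median(history)
    return latest > baseline * (1 + tolerance)


# Hypothetical timings in milliseconds from previous nightly runs.
previous_runs = [102.0, 98.5, 101.2, 99.8, 100.4]

print(exceeds_boundary(previous_runs, 104.0))  # within the tolerance
print(exceeds_boundary(previous_runs, 130.0))  # well above the baseline
```

A check like this could run as an additional build step that exits with a non-zero status when the boundary is exceeded, so that a regression marks the nightly build as failed instead of relying on someone looking at the graphs.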

The graph in the middle shows the effect of recent performance improvements, made visible by temporarily reverting a commit.

Flatpak nightlies are built every night, but we don’t have automatic publishing yet.

Among the next steps we have:

- Making the Buildbot instance publicly available.

- Automatic publishing of nightlies.

- Builds on Windows and OSX.

Sources can be found here.

For more info about Kube, please head over to About Kube.